Explore hundreds of ready-made skills for Claude, Cursor, and more.

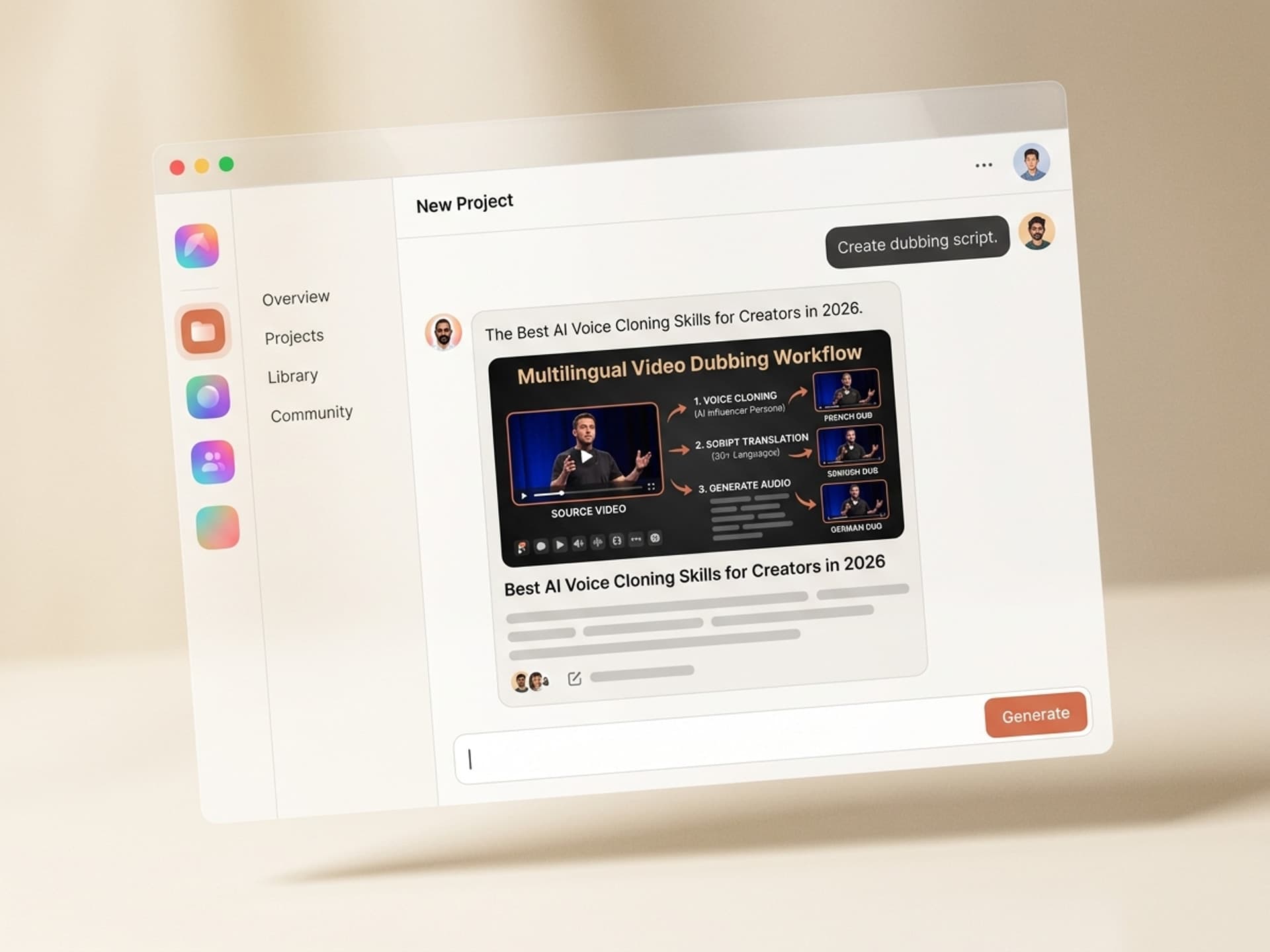

The Best AI Voice Cloning Skills for Creators in 2026

AI voice cloning lets one creator publish in 30+ languages, ship daily AI persona content, and turn a podcast into a 24/7 production line - using a 30-second sample of their own voice. ElevenLabs leads the commercial market with sub-second latency and 70+ languages, but the workflow around it (library setup, dubbing, brand voice consistency, ethics disclosure) is fragmented across five tools. AI voice cloning skills package the whole pipeline into one install, so creators stop wiring tools together and start shipping. The fastest way to start is to grab a ready-made voice skill from Vibe Skills.

This is a creator's playbook, not a tooling roundup. Real podcasters, YouTubers, and AI persona builders are using voice clones to ship more content in more languages without hiring a studio - and the gap between "early adopter" and "everyone does this" is closing fast.


Why Voice Is the Bottleneck for AI Persona Growth

For most creators, the visual side of AI content is solved. Image and video models hit photorealistic quality in 2025. But voice is what makes a persona feel real - and voice is where the workflow breaks.

The bottleneck shows up in three places:

- Production speed. Recording 20 minutes of clean voiceover takes 60 - 90 minutes of studio time once you account for setup, retakes, and editing. Multiply that by daily Shorts and you lose the week.

- Language reach. A creator who only speaks English caps their total addressable audience at roughly 1.5 billion people. With dubbed audio in 10 languages, that number jumps to over 5 billion potential viewers. YouTube has been leaning hard into multi-language audio tracks and auto-dubbing, and MrBeast was among the first large creators to publish dubbed tracks at scale.

- Persona consistency. AI personas need a voice that sounds the same on Tuesday as it did three months ago. Hiring a voice actor for a daily AI character costs $300 - $800 per session and breaks the second they get sick or raise rates.

ElevenLabs reported 2.5 million voices cloned on its platform in 2024 alone. The market is forecast to hit $5.4 billion by 2032, growing at 26% CAGR. The reason is simple: voice cloning collapses the audio production cost from "studio session" to "API call" while keeping short-form output hard to tell apart from a human recording in blind tests.

What's missing is the workflow layer on top of the model - and that's where AI skills come in.


Voice Cloning Use Cases for Creators

Voice cloning is not one feature. It's a stack of use cases that compound when you run them together. Here's where creators are actually getting paid in 2026:

| Use case | What it replaces | Real time saved |
|---|---|---|
| Multi-language video dubbing | $2,000 - $5,000 per language per hour with a human studio | Translate + dub a 10-minute video into 8 languages in under 30 minutes |
| AI persona narration | $300 - $800 per voice actor session, $30K+ per year for daily content | Ship 30 days of AI persona Reels in one afternoon |
| Podcast assistant voice | A second host or producer ($50K+ per year) | Generate intros, outros, ad reads, and segment transitions on demand |
| Audiobook + course narration | $200 - $400 per finished hour for a freelance narrator | Narrate a 6-hour course in one batch render |
| Newsletter audio versions | Skipping audio entirely (most creators do) | Auto-generate a podcast feed from every newsletter post |
| Live event personalization | Generic pre-recorded voicemails | Send 1,000 personalized audio messages to attendees in your own voice |

The economics flip at the second use case. One creator doing dubbing alone breaks even fast. A creator running dubbing + persona + podcast + course narration on the same voice library pays back the entire AI stack in a single Shorts cycle.

The catch is operational, not technical. Most creators try to wire ElevenLabs + a translation tool + a video editor + a podcast platform manually - and quit after two weeks. AI skills solve that.

Browse AI Influencer Skills on Vibe Skills →

The Voice Cloning Tool Landscape in 2026

Quick context on the underlying tools so the skill recommendations make sense. Creators don't need to learn all of these - the skills wrap them.

| Tool | Best for | Languages | Voice clone quality |
|---|---|---|---|
| ElevenLabs | Highest fidelity, podcast and persona work | 70+ | Industry leader. Instant clone from 30s, professional clone from 30 minutes |
| Descript Overdub | Editing existing recordings, podcast cleanup | English-first | Good for fix-ups, weaker for full generation |
| OpenAI Voice Engine | Conversational AI, long-form responses | 50+ | High quality, restricted access (waitlist) |
| Google Vertex AI / Chirp | Enterprise dubbing, YouTube auto-dub | 100+ | Strong on accent transfer, weaker on emotional nuance |
| Resemble AI | Real-time voice cloning, gaming, NPCs | 60+ | Strong real-time API, used in interactive products |

ElevenLabs is the default for creators in 2026. It hit sub-300ms latency in 2025, supports voice cloning from a 30-second sample, and now ships native multilingual dubbing that preserves the speaker's voice across languages. Most of the AI voice cloning skills on the marketplace use ElevenLabs as the primary engine and bolt on the workflow layer.

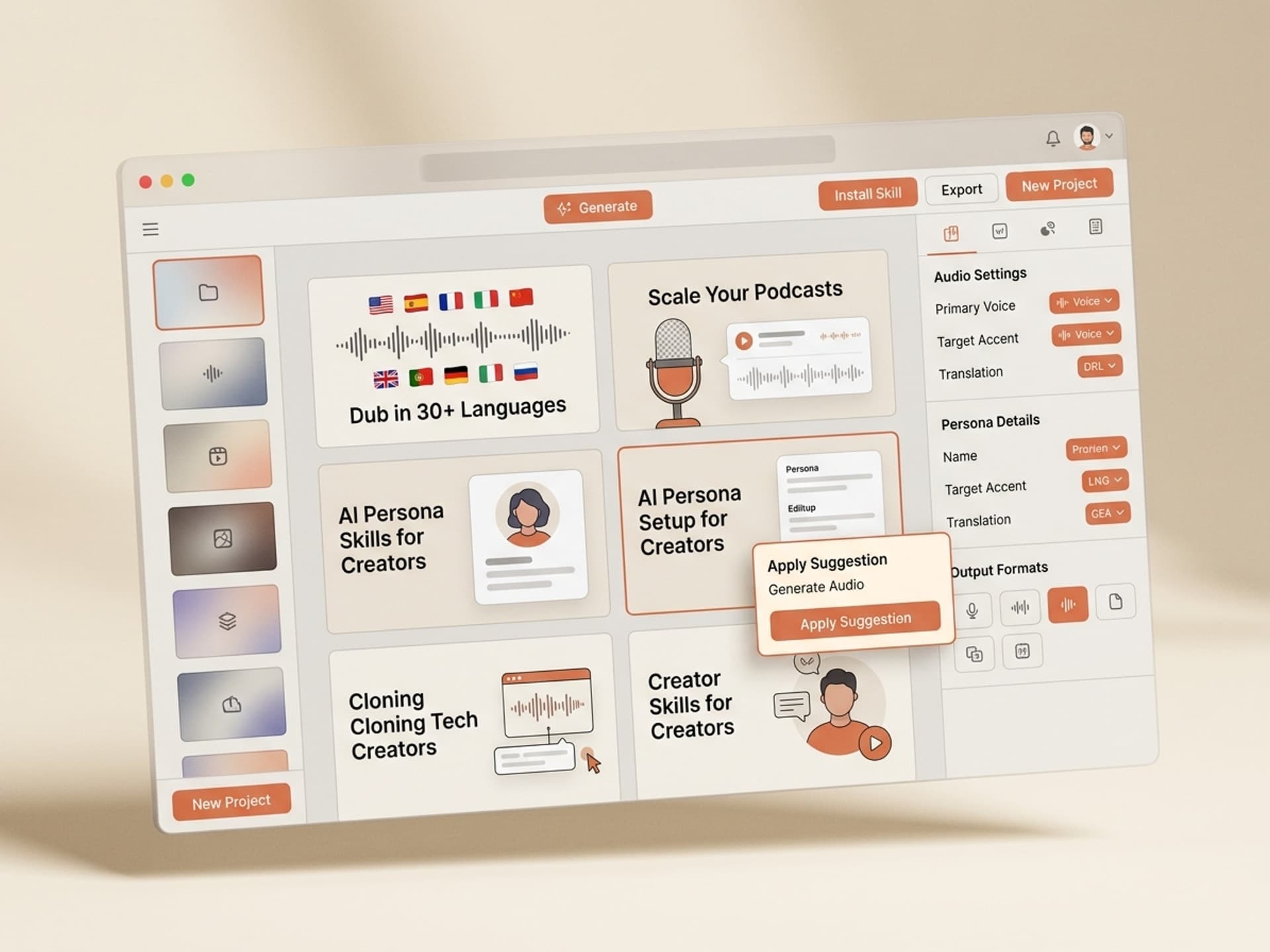

5 AI Voice Cloning Skills on Vibe Skills

Each of these is a packaged workflow - not just a setup checklist. Install one, plug in your voice sample, and ship.

| Skill | Best for | What it includes |
|---|---|---|
| Multi-Language Video Dubber | YouTubers, course creators, social video | Auto-detect source language, translate, generate dubbed track in your cloned voice across 30+ target languages, lipsync optional |
| AI Persona Narrator Kit | AI influencer builders, virtual model creators | Full voice library setup, brand voice rules, intro / outro / hook templates, content cadence presets |
| Podcast AI Co-Host | Podcasters, newsletter audio creators | Cloned voice + content brief input, generates ad reads, segment transitions, episode summaries, social pull quotes |
| Audiobook + Course Narrator | Course creators, indie authors, educators | Batch narration of long-form scripts with consistent pacing, chapter break detection, pronunciation library for technical terms |
| Voice Identity Kit | Solo creators, freelancers, founders | Sets up cloned voice + brand voice rules + 50 reusable audio snippets (CTAs, intros, voicemails, social hooks) |

All five live in the AI Influencers category on Vibe Skills, alongside full identity kits (face, voice, content pillars). Subscribers install unlimited skills - so most creators stack 2 - 3 of these for their persona.

Browse AI Influencer Skills on Vibe Skills →

Clone Your Voice in 30 Minutes (Step by Step)

Here's the actual workflow. End to end, including ethics setup, it takes under 30 minutes the first time with an instant clone; a professional clone adds up to a few hours of training time.

Step 1: Pick the right skill on Vibe Skills

Open the AI Influencers category, pick the workflow that matches your use case (Voice Identity Kit if you're starting from zero, Multi-Language Video Dubber if you already publish video), and install it. Each skill ships with a setup checklist, an ElevenLabs config, and a brand voice template.

Step 2: Record your voice sample

You need 30 seconds of clean audio for a fast clone, or 30 minutes for a professional clone. Record in a quiet room with a USB mic (a $79 Samson Q2U is enough). Speak naturally - read a paragraph, tell a 90-second story, then record 5 different emotional reads (excited, calm, serious, friendly, curious).
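Before uploading, it can help to confirm the recording is long enough for the clone type you want. The sketch below is a minimal check using only Python's standard library. It assumes the sample is an uncompressed WAV file, and the function names and thresholds are illustrative, taken from the 30-second and 30-minute figures in this guide rather than from any provider's documentation.

```python
import wave

# Minimum sample lengths quoted in this guide; adjust if your provider differs.
INSTANT_MIN_SECONDS = 30
PROFESSIONAL_MIN_SECONDS = 30 * 60


def wav_duration_seconds(path: str) -> float:
    """Return the duration of an uncompressed WAV file in seconds."""
    with wave.open(path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def clone_tier(duration_seconds: float) -> str:
    """Map a sample length to the clone type it can support."""
    if duration_seconds >= PROFESSIONAL_MIN_SECONDS:
        return "professional"
    if duration_seconds >= INSTANT_MIN_SECONDS:
        return "instant"
    return "too short"
```

Calling `clone_tier(wav_duration_seconds("sample.wav"))` on your own recording tells you which path is open before you spend time on the upload.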

Step 3: Upload + train the voice

The skill walks you through ElevenLabs voice creation: instant clone for fast turnaround, professional clone for the highest fidelity. Training takes between 30 seconds (instant) and a few hours (professional). Name your voice clearly - "Elena Brand Voice 2026" - so your library stays organized.

Step 4: Set brand voice rules

This is the step every creator skips and every creator regrets. Inside the skill, you fill out a brand voice spec: pace (slow / natural / energetic), tone (warm, authoritative, playful), filler words to allow or block, pronunciation rules for product names. The skill saves these rules and applies them to every render.
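To make the idea concrete, here is one way a brand voice spec could be represented and applied to a script before rendering. This is a hypothetical schema, not the format the skills actually use: `BrandVoiceSpec`, `apply_spec`, and the "Acme" product name are all invented for illustration.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class BrandVoiceSpec:
    """Hypothetical spec mirroring the fields described above."""
    pace: str = "natural"   # slow / natural / energetic
    tone: str = "warm"      # warm / authoritative / playful
    blocked_fillers: List[str] = field(default_factory=list)
    pronunciations: Dict[str, str] = field(default_factory=dict)


def apply_spec(script: str, spec: BrandVoiceSpec) -> str:
    """Strip blocked filler words and swap in phonetic spellings."""
    for filler in spec.blocked_fillers:
        # Remove the filler as a whole word, plus a trailing comma and spaces.
        pattern = rf"\b{re.escape(filler)}\b,?\s*"
        script = re.sub(pattern, "", script, flags=re.IGNORECASE)
    for term, spoken in spec.pronunciations.items():
        # A function replacement keeps the phonetic spelling literal.
        script = re.sub(rf"\b{re.escape(term)}\b", lambda _m: spoken, script)
    return script.strip()


spec = BrandVoiceSpec(
    blocked_fillers=["um", "basically"],
    pronunciations={"Acme": "AK-mee"},
)
print(apply_spec("Um, today we basically ship Acme.", spec))
# prints: today we ship AK-mee.
```

Pace and tone are carried along as plain fields here; how they map onto a provider's voice settings is provider-specific and left out of the sketch.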

Step 5: Generate your first asset

Pick the format from the skill: dubbed video track, podcast intro, AI persona Reel script, course chapter narration. Paste your text, hit render, get an audio file in seconds. Most skills export directly to MP3, WAV, or a video file with the new audio track baked in.
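For readers curious what "hit render" might look like underneath, the sketch below builds a text-to-speech request against ElevenLabs' public REST API using only the standard library. The endpoint path, the `xi-api-key` header, the `eleven_multilingual_v2` model ID, and the `voice_settings` fields reflect that API as commonly documented, but treat them as assumptions and check the current reference before relying on them. How the skills themselves call the API is not described here, and `build_tts_request` and `render_to_file` are illustrative names.

```python
import json
import urllib.request

API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(voice_id: str, text: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a text-to-speech request for a cloned voice."""
    payload = {
        "text": text,
        "model_id": "eleven_multilingual_v2",  # assumed model ID; verify in the docs
        "voice_settings": {"stability": 0.5, "similarity_boost": 0.75},
    }
    return urllib.request.Request(
        url=f"{API_BASE}/text-to-speech/{voice_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def render_to_file(request: urllib.request.Request, out_path: str) -> None:
    """Send the request and write the returned audio bytes to disk."""
    with urllib.request.urlopen(request) as response, open(out_path, "wb") as out:
        out.write(response.read())
```

Separating request construction from sending makes the payload easy to inspect or log before any credits are spent.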

Step 6: Add the disclosure

For any output where viewers might mistake the AI voice for a human, add a disclosure. The skill ships with disclosure templates ("This audio uses an AI voice clone of the creator") and the recommended placement (video description, podcast show notes, social caption). This isn't optional - see the ethics section below.
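If you publish through scripts, the disclosure can be added automatically so it is never forgotten. A minimal sketch, assuming you control the description text before upload; `with_disclosure` is an illustrative helper, not part of any skill.

```python
DISCLOSURE = "This audio uses an AI voice clone of the creator."


def with_disclosure(description: str, disclosure: str = DISCLOSURE) -> str:
    """Append the disclosure once; leave text that already has it unchanged."""
    if disclosure.lower() in description.lower():
        return description
    return f"{description.rstrip()}\n\n{disclosure}"
```

The check is case-insensitive, so re-running the helper on an already-labeled description does not stack duplicate notices.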

Step 7: Ship + reuse

Save the rendered file to your library. The skill keeps a versioned history so you can re-render the same script in a new language, swap the voice, or update the script without losing the voice settings. Most creators set up a "voice library" inside Notion or Frame.io and pull from it for every campaign.
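One simple way to think about versioned history is to give every render a stable key derived from its inputs, so the same script, voice, language, and settings always map to the same entry. The sketch below is an assumption about how such a library could work, not a description of the skill's internals; `render_key` and `RenderLibrary` are hypothetical names, and the library is kept in memory for brevity.

```python
import hashlib
import json
from typing import Dict


def render_key(script: str, voice_id: str, language: str, settings: dict) -> str:
    """Stable ID for one render: identical inputs always give the same key."""
    canonical = json.dumps(
        {"script": script, "voice": voice_id, "language": language, "settings": settings},
        sort_keys=True,
        ensure_ascii=False,
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


class RenderLibrary:
    """In-memory render history; a real one would persist to disk or a database."""

    def __init__(self) -> None:
        self.entries: Dict[str, dict] = {}

    def record(self, script: str, voice_id: str, language: str,
               settings: dict, audio_path: str) -> str:
        key = render_key(script, voice_id, language, settings)
        self.entries[key] = {
            "voice": voice_id,
            "language": language,
            "settings": dict(settings),  # copy so later edits don't alter history
            "audio_path": audio_path,
        }
        return key
```

Re-rendering the same script in a new language produces a new key while the voice settings carry over unchanged, which is the behavior the step above describes.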

Ethics, Consent, and Disclosure (The Part Everyone Skips)

Voice cloning is the most ethically loaded category in AI right now. Three rules keep you out of trouble - and on the right side of platform policies, regulators, and your audience.

Clone only your own voice. Or get explicit, written consent from the person whose voice you're cloning. Regulators are already acting on misuse: in 2024 the FCC proposed a $6 million fine over robocalls that used a cloned voice of President Biden. The EU AI Act requires AI-generated audio that imitates a real person to be disclosed as artificially generated. Your podcast guest, your colleague, your favorite YouTuber - none of them are fair game without a signed release.

Disclose AI-generated audio. Add a clear note in the video description, podcast show notes, or social caption ("AI voice clone of the creator"). YouTube's disclosure requirement for altered or synthetic content went live in 2024 and applies to any synthetic voice that could be mistaken for a real person. Meta and TikTok also label AI-generated content they detect - but doing it yourself looks more credible than letting the platform do it for you.

Never impersonate real people - especially public figures. Cloning a politician, a celebrity, or any real third party for satire, advertising, or persona content is a fast track to a takedown, a defamation suit, or worse. The FCC's February 2024 ruling treats AI-generated voices in robocalls as "artificial" under the Telephone Consumer Protection Act, so such calls are illegal in the US without the recipient's prior consent. Don't go near it.

The good news: every legitimate voice cloning skill on Vibe Skills bakes consent verification, disclosure templates, and platform policy alignment into the workflow. That's part of what you're paying for.

Frequently Asked Questions

Is AI voice cloning legal for creators?

Yes - as long as you only clone your own voice or have written consent from the speaker. Cloning a public figure or a third party without consent is unlawful or legally risky in many jurisdictions and violates the terms of service of the major platforms. The skills on Vibe Skills ship with consent templates and disclosure guidance to help you stay compliant.

How good is AI voice cloning quality vs human in 2026?

Top-tier voice clones from ElevenLabs and Vertex AI Chirp are reported to be indistinguishable from the real speaker in over 80% of blind-test trials for short-form audio. For long-form (30+ minutes uninterrupted), human narration still has a slight edge on emotional nuance and breath control - but the gap closes every quarter. For most creator use cases (Reels, Shorts, podcast intros, dubbing), AI quality is good enough that audiences don't notice.

Can I use voice cloning for podcasts?

Yes, and it's one of the highest-ROI use cases. Use a cloned voice for ad reads, episode intros, outros, segment transitions, and pull quotes - keeping your real voice for the main interview content. Some creators use a full AI co-host. The Podcast AI Co-Host skill on Vibe Skills handles the whole stack: voice clone, brief input, automated segments, and direct export to your podcast host.

How much does it cost to run a voice cloning workflow?

ElevenLabs pricing starts at $5/month for hobby use and scales to $99/month for the Pro tier (which most pro creators use). A Vibe Skills subscription on the Pro plan is $39/month and includes unlimited voice cloning skills plus the rest of the catalog. Total stack cost for a working creator: $138/month, comfortably under $150. Compare that to one freelance dub session at $2,000+ and the math is brutal.
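The arithmetic behind that comparison is short enough to check directly. The figures below are the ones quoted in this answer (the $99/month ElevenLabs tier, the $39/month Vibe Skills Pro plan, and the $2,000 low end of a freelance dub session); the variable names are just for illustration.

```python
ELEVENLABS_MONTHLY = 99        # USD, the $99/month tier quoted above
VIBE_SKILLS_PRO_MONTHLY = 39   # USD, Pro plan quoted above
DUB_SESSION = 2_000            # USD, low end of one freelance dub session

stack_monthly = ELEVENLABS_MONTHLY + VIBE_SKILLS_PRO_MONTHLY
months_covered = DUB_SESSION / stack_monthly

print(f"Stack: ${stack_monthly}/month")
# prints: Stack: $138/month
print(f"One ${DUB_SESSION:,} dub session covers {months_covered:.1f} months of the stack")
# prints: One $2,000 dub session covers 14.5 months of the stack
```

In other words, the price of a single studio dub session pays for a little over a year of the full stack at these rates.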

Will my audience care that I'm using AI voice?

Most won't notice if the workflow is dialed in. The audience cares about three things in this order: is the content good, is the creator authentic, is there a disclosure. Disclose the AI voice clearly and you preserve trust. Hide it and you'll lose the audience the moment they find out - which they will. Studies from 2025 found that audiences punish hidden AI use 3x harder than disclosed AI use.

What's the difference between voice cloning and AI voiceover?

AI voiceover uses a stock voice from a library (ElevenLabs, OpenAI TTS, Google Cloud TTS). Voice cloning generates audio in your voice (or a consenting speaker's voice) from a sample. For brand consistency, voice cloning wins. For one-off generic narration, stock AI voiceover is fine and slightly cheaper.

Can I dub my YouTube videos into other languages with my own voice?

Yes - this is the #1 use case in 2026. The Multi-Language Video Dubber skill on Vibe Skills takes your source video, transcribes the audio, translates it into your target languages, and generates dubbed tracks in your cloned voice across 30+ languages. YouTube's multi-language audio feature lets you upload all the tracks to a single video so each viewer hears their own language automatically.
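The transcribe, translate, and synthesize stages can be pictured as a small pipeline. The sketch below shows only the control flow, with each stage passed in as a function. It is an illustration under that assumption, not the skill's actual implementation, and `dub_video` and the type aliases are hypothetical names.

```python
from typing import Callable, Dict, Iterable

Transcribe = Callable[[str], str]          # video path -> source-language text
Translate = Callable[[str, str], str]      # (text, target language) -> translated text
Synthesize = Callable[[str, str], bytes]   # (text, target language) -> audio bytes


def dub_video(
    video_path: str,
    languages: Iterable[str],
    transcribe: Transcribe,
    translate: Translate,
    synthesize: Synthesize,
) -> Dict[str, bytes]:
    """Return one dubbed audio track per target language."""
    transcript = transcribe(video_path)  # transcribe once, reuse for every language
    tracks: Dict[str, bytes] = {}
    for lang in languages:
        translated = translate(transcript, lang)
        tracks[lang] = synthesize(translated, lang)
    return tracks
```

Because the stages are plain functions, you can swap the translation or voice provider without touching the loop, and test the flow with stubs before wiring in paid APIs.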

The Bottom Line: Voice Is the New Distribution Channel

In 2026, every creator who isn't using voice cloning is leaving a major distribution channel on the table. Multi-language reach, daily AI persona content, podcast scaling, course narration - these aren't experimental anymore. They're the baseline for serious creators.

The right move isn't to learn five tools and wire them together. It's to install one skill that wraps the workflow, plug in your voice sample, and ship. AI voice cloning skills on Vibe Skills handle the ElevenLabs setup, the brand voice rules, the dubbing pipeline, the disclosure templates, and the export formats - so you stay in creator mode instead of operator mode.

Browse voice cloning + AI persona skills on Vibe Skills →

Skip the studio. Ship in your voice, in every language. Install an AI voice cloning skill on Vibe Skills.